Introduction

In manufacturing and industrial automation, every sensor reading, machine signal, and test result is raw material for an ML model. Data acquisition (DAQ) for machine learning is the systematic process of collecting, measuring, and preparing that raw data so it's suitable to train, validate, and improve those models. Capture it poorly, and the models that follow will be unreliable from day one.

The cost of getting this wrong is measurable: Gartner estimates poor data quality costs enterprises $12.9 million annually, and MIT reports that 95% of generative AI pilots produce zero measurable ROI, with data readiness cited as the primary failure point.

In manufacturing environments where Controlink Systems has supported high-speed process monitoring, end-of-line testing, and shop-floor automation since 1998, the continuous streams of structured sensor and machine data are powerful ML training assets. They only deliver that value when captured with ML intent from the outset.

This guide explains what the DAQ process is, why it is critical for ML performance, how it works end-to-end, what methods exist, and what commonly goes wrong.

TL;DR

- Data acquisition is the first and most consequential step in any ML workflow—model performance is bounded by data quality, not algorithm choice

- In manufacturing environments, DAQ sources include sensors, PLCs, machine controllers, and networked devices

- The pipeline runs from signal conditioning and analog-to-digital conversion through logging and preprocessing into ML-ready datasets

- Four primary acquisition methods exist: direct sensing, API/database extraction, manual entry, and open datasets — each with distinct tradeoffs

- Most underperforming ML models fail because of DAQ problems, not model architecture

What Is Data Acquisition in Machine Learning?

Data acquisition in the ML context is the process of identifying, gathering, and compiling data from real-world or digital sources into a form that machine learning algorithms can ingest—going beyond simply recording numbers to ensuring that data is representative, sufficient, and consistent.

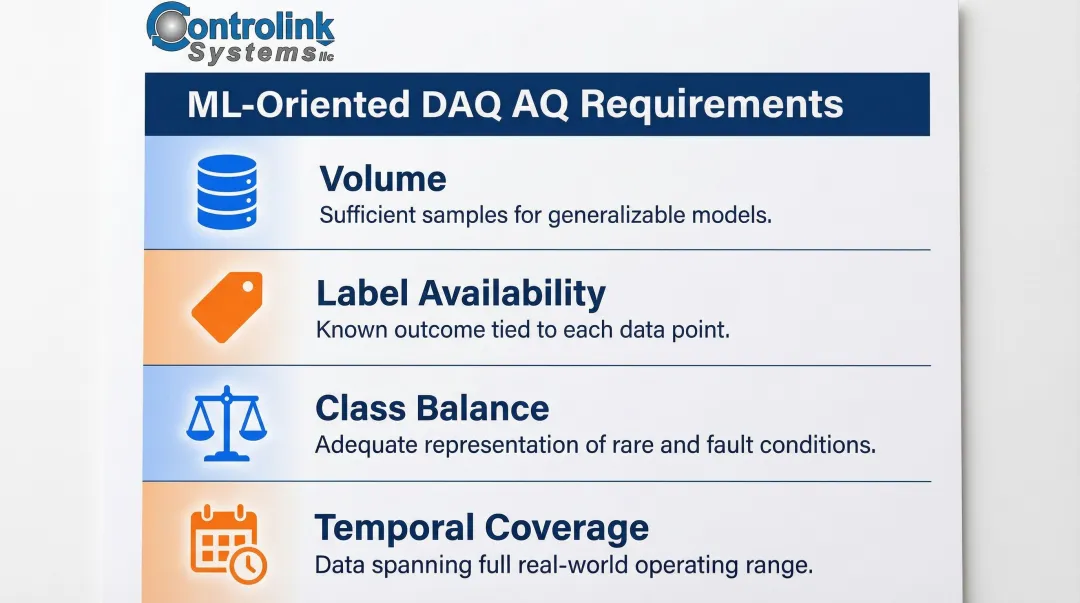

ML-oriented DAQ is distinct from general measurement. Traditional DAQ focuses on accurate real-time readings for monitoring or control. ML-oriented DAQ goes further, requiring attention to:

- Volume: Enough samples to train a generalizable model

- Label availability: Whether each data point carries a known outcome

- Class balance: Adequate representation of rare or fault conditions

- Temporal coverage: Data spanning the range of real-world operating scenarios

A vibration sensor monitoring a bearing might sample at 2 kHz for control purposes, but detecting early-stage bearing faults requires sampling at 25.6 kHz or higher to capture high-frequency structural resonances.

DAQ is not the same as data preprocessing or feature engineering, though those steps follow immediately after. The acquisition phase determines what information is physically captured and logged; preprocessing transforms that logged data into training-ready formats.

Why Data Acquisition Matters for ML in Manufacturing and Industrial Environments

The "garbage in, garbage out" principle applies with full force in industrial ML. Biased, incomplete, or low-resolution sensor data produces models that fail when deployed on the shop floor, in end-of-line testing, or in predictive maintenance scenarios. Data scientists spend 37.75% to 60% of their time cleaning and organizing data, which supports the practitioner rule that data preparation, not modeling, consumes the largest share of ML project effort.

Manufacturing's Specific Demands on DAQ

Manufacturing places unique demands on DAQ for ML:

- High sampling rates for vibration and process signals

- Synchronized timestamps across multiple channels

- Structured labels tied to known machine states (fault vs. normal)

- Data captured across full operating ranges—not just nominal conditions

Without a deliberate DAQ strategy, failure modes compound quickly. Models trained only on normal-operation data fail to detect anomalies, while those trained on data from one machine generalize poorly to others. Intermittent sensor faults create silent corruption in the training set that won't surface until deployment.

The cost is real: in 2022, Unity Technologies reported approximately $110 million in lost revenue after inaccurate data ingestion corrupted datasets used to train predictive machine learning models.

Regulatory and Quality Dimensions

In industries such as aerospace, medical devices, and automotive, data used to train inspection or process-control models may need to meet traceability and documentation requirements:

- FDA 21 CFR Part 11 requires secure, time-stamped audit trails that independently record the date and time of actions that create, modify, or delete electronic records

- ISO 13485:2016 mandates software validation procedures for quality management systems, scaled to the level of risk involved

- AS9100D requires measurement traceability to national or international standards

- IATF 16949:2016 requires documented record retention policies — production part approvals and test data must be kept for the active production duration plus one calendar year

DAQ architecture decisions made early in a project determine whether the resulting dataset will satisfy these requirements — or require expensive rework later.

High-speed process monitoring, EOL test systems, and CNC/shop-floor automation environments naturally generate continuous streams of structured sensor and machine data. Controlink Systems has operated in exactly these environments since 1998, and when that data is captured with ML intent from the outset, it becomes a powerful training asset rather than an afterthought.

How the Data Acquisition Process Works for ML

The DAQ process flows from physical phenomena through multiple transformation stages into ML-ready datasets. Each stage introduces potential failure points that directly impact model performance.

Step 1: Sensing and Signal Conditioning

Sensors or transducers convert physical parameters—temperature, vibration, pressure, current draw, cycle time—into electrical signals. Engineers then apply signal conditioning — amplification, filtering, linearization — to keep the signal clean and compatible with downstream digitization.

Inadequate conditioning is a hidden source of training data corruption. Electrical noise, temperature-induced sensor drift, vibration interference between adjacent machines, and intermittent network connectivity all introduce variability. Each must be managed — or at minimum documented — before the data is used for ML training.

Key conditioning requirements:

- Shielded twisted-pair cabling to limit electromagnetic interference (EMI)

- Shield grounding at one point only (typically at the signal source) to prevent ground loops

- Anti-aliasing low-pass filters before ADC conversion

- Amplification matched to ADC input range for maximum resolution
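
As a rough illustration of the last requirement, the sketch below picks the largest gain from a set of available amplifier settings that keeps the expected signal peak inside the ADC input range. The gain list, the 10% headroom, and the function name `select_gain` are illustrative assumptions, not values from any particular DAQ device.

```python
def select_gain(signal_peak_v, adc_full_scale_v, available_gains, headroom=0.10):
    """Return the largest gain that keeps the amplified peak within the ADC range.

    headroom reserves a fraction of full scale so unexpected peaks do not clip.
    """
    if signal_peak_v <= 0:
        raise ValueError("signal_peak_v must be positive")
    limit_v = adc_full_scale_v * (1.0 - headroom)
    usable = [g for g in available_gains if signal_peak_v * g <= limit_v]
    if not usable:
        raise ValueError("signal exceeds the ADC range even at the lowest gain")
    return max(usable)


# Hypothetical example: a 50 mV peak sensor signal into a 10 V full-scale ADC input.
gain = select_gain(0.050, 10.0, [1, 10, 100, 1000])
used_fraction = 0.050 * gain / 10.0  # share of the ADC range the signal occupies
print(gain, used_fraction)
```

A gain that is too low wastes ADC codes on empty range, while one that is too high clips the peaks that fault-detection models most need to see.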

Step 2: Analog-to-Digital Conversion and Logging

An ADC samples the conditioned analog signal at a defined rate and resolution, converting it to discrete digital values. A data logger then stores these values with timestamps either locally or to a connected system.

Sampling rate and ADC resolution determine what patterns an ML model can learn from the data. The Nyquist-Shannon sampling theorem requires sampling at more than twice the highest frequency component. Practical industrial DAQ samples faster than that minimum, often 5 to 10 times the highest frequency of interest when waveform shape matters, because real analog anti-aliasing filters roll off gradually rather than cutting off sharply.
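
To make the sampling-rate point concrete, the sketch below computes the frequency at which a pure tone would appear after sampling with an ideal sampler and no anti-aliasing filter. The 3.2 kHz resonance is a hypothetical value chosen for illustration.

```python
def apparent_frequency(f_signal_hz, f_sample_hz):
    """Frequency at which a pure tone appears after ideal sampling (no filter)."""
    folded = f_signal_hz % f_sample_hz
    nyquist = f_sample_hz / 2.0
    # Content above the Nyquist frequency folds back into the baseband.
    return folded if folded <= nyquist else f_sample_hz - folded


resonance_hz = 3200.0  # hypothetical bearing resonance
at_2k = apparent_frequency(resonance_hz, 2000.0)     # undersampled
at_25k6 = apparent_frequency(resonance_hz, 25600.0)  # adequately sampled
print(at_2k, at_25k6)
```

At 2 kHz the resonance shows up at a false lower frequency, so a model trained on that data learns a feature that does not physically exist. At 25.6 kHz the tone is recorded at its true frequency.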

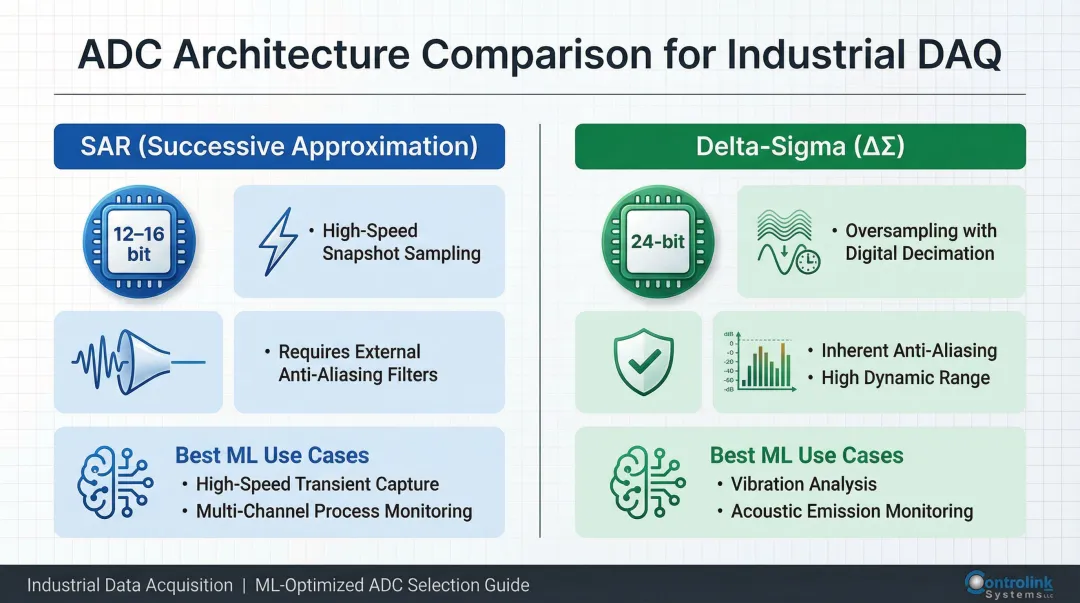

Two primary ADC architectures serve industrial DAQ:

| Architecture | Resolution | Key Characteristics | Ideal ML Use Cases |
|---|---|---|---|
| SAR (Successive Approximation) | 12-16 bit | High-speed snapshot sampling; requires external anti-aliasing filters | High-speed transient capture; multi-channel process monitoring |
| Delta-Sigma (ΔΣ) | 24-bit | Oversampling with digital decimation; inherent anti-aliasing; high dynamic range | High-resolution vibration analysis; acoustic emission monitoring |

For vibration-based ML, 24-bit Delta-Sigma ADCs offer a clear advantage: built-in anti-aliasing and high dynamic range prevent high-frequency noise from corrupting training data.
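
The effect of resolution can be estimated with two standard relations: the size of one least-significant bit (LSB) is the full-scale range divided by 2^N, and the ideal quantization-limited signal-to-noise ratio for a full-scale sine wave is about 6.02·N + 1.76 dB. The sketch below applies both, assuming a hypothetical 20 V input span (±10 V). Real converters deliver fewer effective bits than their nominal resolution, so treat these as upper bounds.

```python
def lsb_volts(full_scale_range_v, bits):
    """Smallest voltage step an ideal N-bit ADC can resolve."""
    return full_scale_range_v / (2 ** bits)


def ideal_snr_db(bits):
    """Ideal quantization-limited SNR for a full-scale sine wave."""
    return 6.02 * bits + 1.76


for bits in (12, 16, 24):
    step_uv = lsb_volts(20.0, bits) * 1e6
    print(f"{bits}-bit: LSB = {step_uv:.3f} uV, ideal SNR = {ideal_snr_db(bits):.2f} dB")
```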

Step 3: Transfer, Labeling, and Preprocessing

Once logged, raw data must move out of the acquisition system before it can train a model. That means exporting to a usable format (CSV, database, time-series store), labeling with outcome or event information, and preprocessing before splitting into training and validation sets.

Labeling is where most industrial ML projects stall. In manufacturing environments, annotation errors stem from:

- Vague defect boundaries with no clear pass/fail threshold

- Diverse material appearances across part runs or shifts

- Human annotator inconsistency under production pressure

- Severe class imbalance — normal data can outnumber fault events by 99:1 or more

Preprocessing includes normalization, resampling, and cleaning. However, preprocessing cannot compensate for fundamental acquisition failures: missing data, insufficient sampling rates, or uncalibrated sensors.
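
A minimal sketch of two of these steps, under stated assumptions: the data is a single time-ordered channel, the split is chronological rather than shuffled, and normalization statistics are fitted on the training portion only so that no information from the validation period leaks into training. The function names and sample readings are illustrative.

```python
from statistics import mean, stdev


def chronological_split(samples, train_fraction=0.8):
    """Split time-ordered samples without shuffling; validation data is strictly later."""
    cut = int(len(samples) * train_fraction)
    return samples[:cut], samples[cut:]


def fit_zscore(train_values):
    """Compute normalization statistics from the training split only."""
    mu, sigma = mean(train_values), stdev(train_values)
    if sigma == 0:
        raise ValueError("training values are constant; z-score is undefined")
    return mu, sigma


def apply_zscore(values, mu, sigma):
    return [(v - mu) / sigma for v in values]


# Hypothetical temperature readings, oldest first.
readings = [20.0, 21.0, 19.0, 20.5, 19.5, 20.0, 21.5, 18.5, 25.0, 26.0]
train, val = chronological_split(readings)
mu, sigma = fit_zscore(train)
train_z = apply_zscore(train, mu, sigma)
val_z = apply_zscore(val, mu, sigma)  # reuses training statistics
```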

Key Methods and Sources for Acquiring ML Training Data

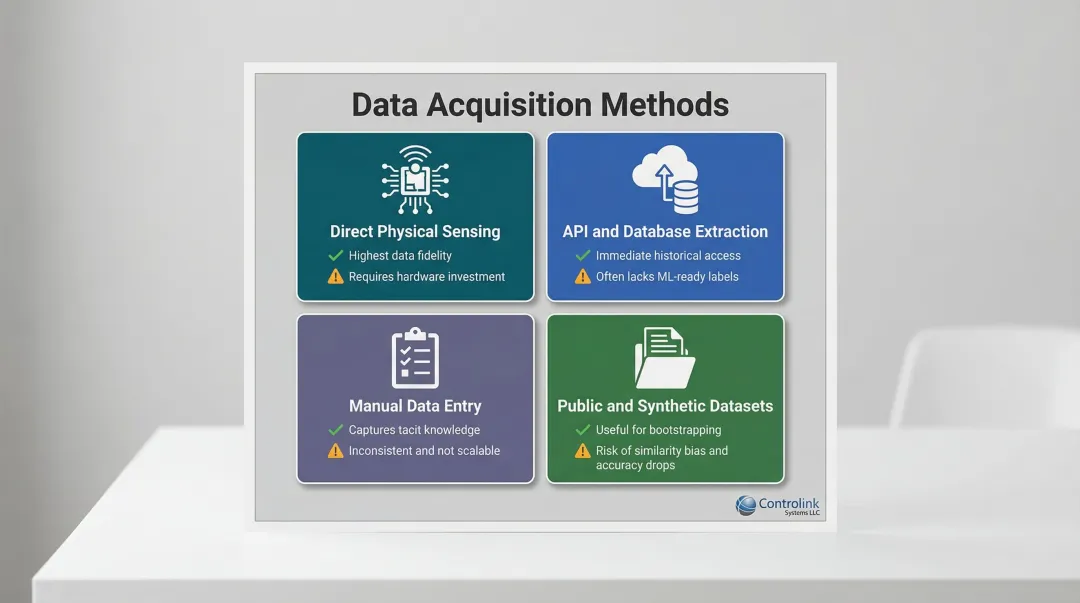

Four primary data acquisition methods serve industrial ML, each with distinct tradeoffs:

1. Direct Physical Sensing via DAQ Hardware

The highest-fidelity method for manufacturing ML. DAQ hardware connected to machines, processes, or test fixtures captures raw sensor signals at controlled sampling rates with known resolution and calibration status.

Advantages:

- Complete control over sampling parameters

- Known sensor calibration and traceability

- Ability to capture rare fault conditions deliberately

Disadvantages:

- Requires upfront hardware investment

- Sensor installation may require process downtime

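- Data volume can be substantial (25.6 kHz vibration data generates roughly 0.28 GB per channel per hour as packed 24-bit samples, and can exceed 2 GB per channel per hour when stored as timestamped text)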
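
Storage needs follow directly from sampling rate and encoding. The sketch below estimates hourly volume per channel for three encodings. The bytes-per-sample figures, in particular the 25 bytes assumed for one timestamped text row, are illustrative assumptions.

```python
def bytes_per_hour(sample_rate_hz, bytes_per_sample, channels=1):
    """Uncompressed storage needed for one hour of continuous acquisition."""
    return sample_rate_hz * bytes_per_sample * channels * 3600


rate_hz = 25_600
packed_24bit = bytes_per_hour(rate_hz, 3)   # raw 24-bit ADC codes
float64 = bytes_per_hour(rate_hz, 8)        # double-precision values
text_rows = bytes_per_hour(rate_hz, 25)     # assumed ~25 bytes per CSV row

for name, size in [("24-bit", packed_24bit), ("float64", float64), ("text", text_rows)]:
    print(f"{name}: {size / 1e9:.2f} GB per channel per hour")
```

The spread between binary and text encodings is large enough that the storage format is worth deciding before the acquisition campaign starts.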

2. API and Database Extraction

A fast path to historical data already in digital form. Extraction from existing MES, SCADA, ERP, or historian systems provides immediate access to months or years of operational data.

Advantages:

- Immediate availability of historical data

- No new sensor installation required

- Often includes contextual metadata (production schedules, maintenance records)

Disadvantages:

- Data was collected for process control, not ML training: it often lacks labels, contains gaps, and was sampled at rates not suited to ML objectives

- Timestamp synchronization across systems may be inconsistent

- Sensor calibration history may not be documented
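
Before using extracted historian data for training, it helps to check it for gaps. The sketch below flags any pair of consecutive timestamps that are further apart than the expected logging interval plus a tolerance. The one-second interval, the 50% tolerance, and the function name are assumptions for illustration.

```python
from datetime import datetime, timedelta


def find_gaps(timestamps, expected_interval, tolerance=0.5):
    """Return (start, end) pairs where consecutive samples are too far apart."""
    limit = expected_interval * (1 + tolerance)
    gaps = []
    for prev, curr in zip(timestamps, timestamps[1:]):
        if curr - prev > limit:
            gaps.append((prev, curr))
    return gaps


# Hypothetical 1 Hz log with samples missing between second 3 and second 10.
t0 = datetime(2024, 1, 1, 8, 0, 0)
stamps = [t0 + timedelta(seconds=s) for s in (0, 1, 2, 3, 10, 11, 12)]
gaps = find_gaps(stamps, timedelta(seconds=1))
for start, end in gaps:
    print(f"gap of {(end - start).total_seconds():.0f} s starting at {start}")
```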

These first two methods cover most automated data sources. When the attribute you need simply can't be measured by a sensor, the next method becomes necessary.

3. Manual Data Entry and Structured Observation

Slower and error-prone, but sometimes the only option for capturing human-assessed attributes such as visual defect classifications, operator observations, or expert judgments.

Advantages:

- Captures tacit knowledge not available from sensors

- Flexible for attributes that cannot be automated

Disadvantages:

- High labor cost and time consumption

- Inconsistent labeling between operators

- Not scalable to high-volume production
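
Inconsistent labeling between operators can be measured before it reaches the training set. One common statistic is Cohen's kappa, which compares observed agreement between two annotators with the agreement expected by chance. The sketch below is a plain-Python version; the operator labels are made-up example data.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical class
    return (observed - expected) / (1 - expected)


# Hypothetical visual-inspection labels from two operators on the same ten parts.
operator_1 = ["ok", "ok", "defect", "ok", "defect", "ok", "ok", "defect", "ok", "ok"]
operator_2 = ["ok", "ok", "defect", "defect", "defect", "ok", "ok", "ok", "ok", "ok"]
kappa = cohens_kappa(operator_1, operator_2)
print(f"kappa = {kappa:.3f}")
```

Here the operators agree on 8 of 10 parts, yet kappa is only about 0.52 because most of that agreement is expected by chance when "ok" dominates.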

4. Public, Open-Source, and Synthetic Datasets

Useful for bootstrapping models when proprietary data is scarce, but must be validated for relevance to the specific industrial context.

Critical limitation: Models trained on benchmark datasets such as the Case Western Reserve University (CWRU) bearing dataset suffer severe accuracy drops when deployed in real-world industrial environments. Researchers have identified a "similarity bias" flaw: the dataset reuses the same physically faulted bearings across different speed/load conditions, so models learn test-rig-specific features rather than generalized fault features. When evaluated on independent bearings, classification accuracy drops from >95% to 50-60%.
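
One way to guard against this kind of leakage is to split by physical unit rather than by recording, so that no bearing contributes data to both the training and test sets. The sketch below shows the idea in plain Python. The record layout, bearing IDs, and labels are hypothetical and are not taken from the CWRU files.

```python
import random


def split_by_group(records, group_key, test_fraction=0.25, seed=0):
    """Hold out whole groups (e.g. physical bearings) so none appears in both splits."""
    groups = sorted({r[group_key] for r in records})
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_test = max(1, round(len(groups) * test_fraction))
    test_groups = set(groups[:n_test])
    train = [r for r in records if r[group_key] not in test_groups]
    test = [r for r in records if r[group_key] in test_groups]
    return train, test


# Hypothetical metadata: eight bearings, each recorded under four load conditions.
bearing_labels = {
    "B1": "normal", "B2": "normal", "B3": "inner_race", "B4": "inner_race",
    "B5": "outer_race", "B6": "outer_race", "B7": "ball", "B8": "ball",
}
records = [
    {"bearing_id": b, "load_hp": load, "label": label}
    for b, label in bearing_labels.items()
    for load in (0, 1, 2, 3)
]
train, test = split_by_group(records, "bearing_id")
train_ids = {r["bearing_id"] for r in train}
test_ids = {r["bearing_id"] for r in test}
```

In practice the held-out groups should also be chosen so that every fault class still appears in the training set. This sketch does not enforce that.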

IoT and Networked Device Data

The four methods above define what data you collect. How that data travels from the machine to your ML pipeline, particularly in Methods 1 and 2, often depends on the industrial network protocol in use. Machine controllers, PLCs, and networked shop-floor devices continuously emit operational data, and systems that interface with protocols such as Modbus, PROFINET, EtherCAT, and serial communications can serve as structured, continuous DAQ pipelines feeding ML training workflows.

Protocol performance varies dramatically:

| Protocol | Min. Cycle Time | Jitter/Sync | Suitability for ML DAQ |
|---|---|---|---|
| EtherCAT | < 100 µs (down to 12.5 µs) | << 1 µs | Excellent: Ideal for high-rate, deterministic continuous waveform streaming |
| PROFINET IRT | 31.25 µs | 1 µs | Excellent: Hardware-scheduled bandwidth allows reliable high-speed DAQ |
| EtherNet/IP (CIP Sync) | Millisecond range | < 100 ns (via IEEE-1588) | Good: Excellent time-stamping for multi-axis alignment |
| Modbus TCP/RTU | 25-50 ms per device | Highly variable | Poor: Only suitable for low-rate periodic telemetry |

Do not attempt to stream 25 kHz vibration data over Modbus. High-frequency ML DAQ requires EtherCAT or PROFINET IRT — anything less introduces packet loss and timing gaps that corrupt the training data before it ever reaches your model.
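
A back-of-the-envelope check shows why. A single Modbus Read Holding Registers request returns at most 125 16-bit registers, so the polling cycle time caps throughput. The sketch below makes the optimistic assumption that the device buffers samples between polls and that every poll returns a full block of one-register samples.

```python
MAX_REGISTERS_PER_READ = 125  # protocol limit for one Read Holding Registers request


def max_modbus_samples_per_second(cycle_time_s, registers_per_sample=1):
    """Upper bound on sample throughput when every poll returns a full block."""
    polls_per_second = 1.0 / cycle_time_s
    samples_per_poll = MAX_REGISTERS_PER_READ // registers_per_sample
    return polls_per_second * samples_per_poll


required_rate = 25_600                               # samples per second, one channel
best_case = max_modbus_samples_per_second(0.025)     # 25 ms cycle
worst_case = max_modbus_samples_per_second(0.050)    # 50 ms cycle
print(f"best case {best_case:.0f} S/s, worst case {worst_case:.0f} S/s, "
      f"required {required_rate} S/s")
```

Even the best case falls short of a single 25.6 kS/s channel by roughly a factor of five, before accounting for jitter or a second device on the bus.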

Factors That Affect Data Acquisition Quality for ML

Volume and Diversity

Training data must cover all operating conditions, not just nominal states. A dataset skewed 95% toward normal operation will produce a model that misses the fault conditions it was built to catch — the rare-but-critical events where ML adds the most value. As a rule of thumb, aim for balanced representation across fault classes, even if that requires synthetic augmentation or targeted collection campaigns for underrepresented conditions.
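
When collecting more fault data is not immediately possible, one common mitigation is to weight classes inversely to their frequency during training. The sketch below computes such weights with the formula n_samples / (n_classes × class_count), the same heuristic scikit-learn uses for its "balanced" class weights. The 95/5 label split is example data.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency in the label list."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


labels = ["normal"] * 95 + ["fault"] * 5
weights = balanced_class_weights(labels)
print(weights)
```

Weighting changes how much each existing fault sample counts during training. It does not add information about fault conditions that were never recorded.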

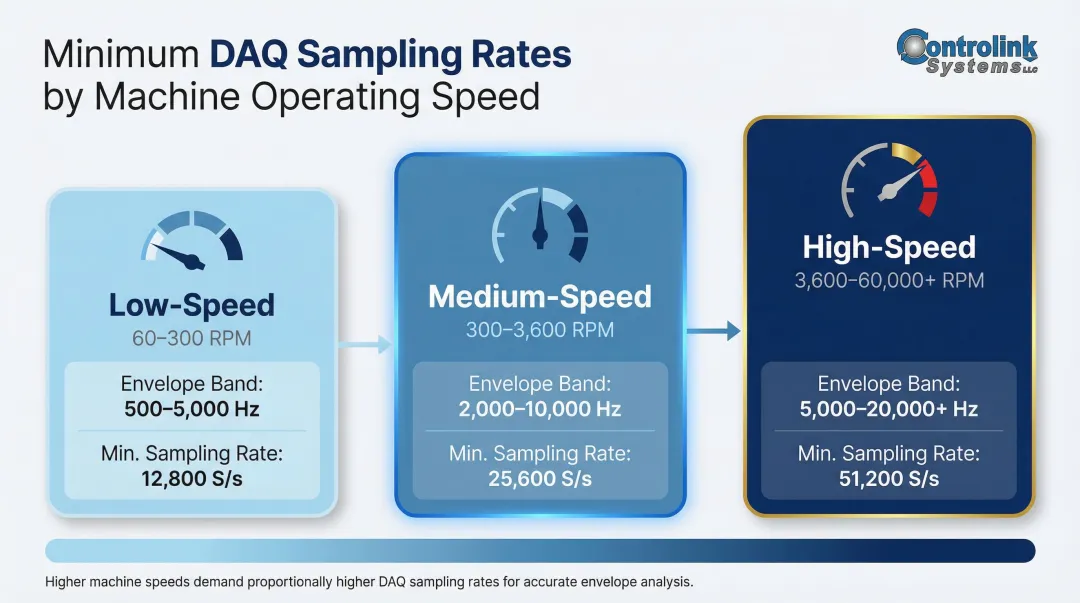

Sampling Rate and Resolution

Sampling rate must match the frequency of the signal of interest. Vibration data for bearing fault detection requires sampling at 25.6 kS/s or higher to capture 2-10 kHz structural resonances. Standard low-power IoT sensors sampling at 1-2 kHz are blind to early-stage bearing defects.

| Machine Speed | Envelope Analysis Band | Minimum Sampling Rate |
|---|---|---|
| Low-Speed (60–300 RPM) | 500–5,000 Hz | 12,800 S/s |
| Medium-Speed (300–3,600 RPM) | 2,000–10,000 Hz | 25,600 S/s |
| High-Speed (3,600–60,000 RPM) | 5,000–20,000+ Hz | 51,200 S/s or higher |
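
The minimum rates in the table are consistent with the 2.56 multiplier commonly used in vibration analysis, applied to the upper edge of each band. That derivation is an assumption on our part, since the table does not state it. The sketch below reproduces the figures.

```python
def min_sampling_rate(max_band_hz, factor=2.56):
    """Minimum sampling rate for a given upper band edge, using a 2.56x multiplier."""
    return round(max_band_hz * factor)


upper_band_edges_hz = {"low-speed": 5_000, "medium-speed": 10_000, "high-speed": 20_000}
rates = {name: min_sampling_rate(f) for name, f in upper_band_edges_hz.items()}
for name, rate in rates.items():
    print(f"{name}: {rate} S/s")
```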

Label Quality and Consistency

Mislabeled data degrades supervised models faster than missing data. In industrial settings, label noise stems from vague defect boundaries and inconsistent human annotation — a technician calling one vibration signature "normal wear" and another calling it "early fault" introduces errors the model will memorize as truth.

Frameworks like Confident Learning help identify these errors by estimating which labels are likely wrong based on the model's own predicted probabilities, without requiring a clean reference set.
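
The core idea can be sketched in a few lines. This is a simplified illustration, not the full method; the open-source cleanlab library provides a complete implementation. It assumes `pred_probs` holds out-of-sample predicted probabilities (for example from cross-validation), computes a per-class confidence threshold, and flags examples that the model confidently assigns to a class other than the given label.

```python
def find_likely_label_errors(labels, pred_probs):
    """Flag indices whose confident predicted class disagrees with the given label.

    labels: list of int class indices; pred_probs: list of per-class probability lists.
    """
    n_classes = len(pred_probs[0])
    thresholds = []
    for k in range(n_classes):
        probs_k = [p[k] for y, p in zip(labels, pred_probs) if y == k]
        if not probs_k:
            raise ValueError(f"class {k} has no labeled examples")
        # Threshold: average confidence in class k among examples labeled k.
        thresholds.append(sum(probs_k) / len(probs_k))

    suspects = []
    for i, (y, p) in enumerate(zip(labels, pred_probs)):
        confident = [k for k in range(n_classes) if p[k] >= thresholds[k]]
        if confident:
            likely = max(confident, key=lambda k: p[k])
            if likely != y:
                suspects.append(i)
    return suspects


# Hypothetical data: class 0 = "normal wear", class 1 = "early fault".
labels = [0, 0, 0, 0, 1, 1, 1, 0]
pred_probs = [
    [0.90, 0.10], [0.80, 0.20], [0.85, 0.15], [0.90, 0.10],
    [0.10, 0.90], [0.20, 0.80], [0.15, 0.85], [0.10, 0.90],
]
suspects = find_likely_label_errors(labels, pred_probs)
print(suspects)
```

Flagged examples are candidates for expert review, not automatic relabeling.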

Sensor Calibration and Maintenance

Drifting or uncalibrated sensors introduce systematic errors into training data without triggering obvious alerts:

- Industrial piezoelectric accelerometers experience sensitivity loss of less than 0.5% per year

- Type K thermocouples experience "aging" between 600°F and 1,200°F (roughly 315°C to 650°C), which causes readings to drift higher than the true temperature

- Platinum RTDs operated at 500°C can drift by as much as 0.35°C after 1,000 hours

ISO/IEC 17025 requires that measuring equipment be calibrated against measurement standards traceable to international or national standards. For critical mass and temperature standards, NIST recommends initial intervals of 12 to 36 months, adjusted based on control charts and historical data.
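
A control chart for calibration checks can be as simple as comparing each new reading of a reference standard against limits derived from a baseline period. The sketch below uses mean ± 3 standard deviations, which is a common convention and not something the standards above prescribe. The readings are made-up example values.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Lower and upper control limits from baseline check-standard readings."""
    mu, sigma = mean(baseline), stdev(baseline)
    return mu - k * sigma, mu + k * sigma


def out_of_control(readings, limits):
    """Indices of readings that fall outside the control limits."""
    lower, upper = limits
    return [i for i, r in enumerate(readings) if r < lower or r > upper]


# Hypothetical readings of a 100.00-unit reference standard.
baseline = [100.00, 100.02, 99.98, 100.01, 99.99, 100.00, 100.03, 99.97]
limits = control_limits(baseline)
later_checks = [100.01, 100.02, 100.05, 100.09, 100.12]  # slow upward drift
flagged = out_of_control(later_checks, limits)
print(limits, flagged)
```

Training data logged after the first flagged check is suspect until the sensor is recalibrated, which is one reason to store calibration events alongside the measurements.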

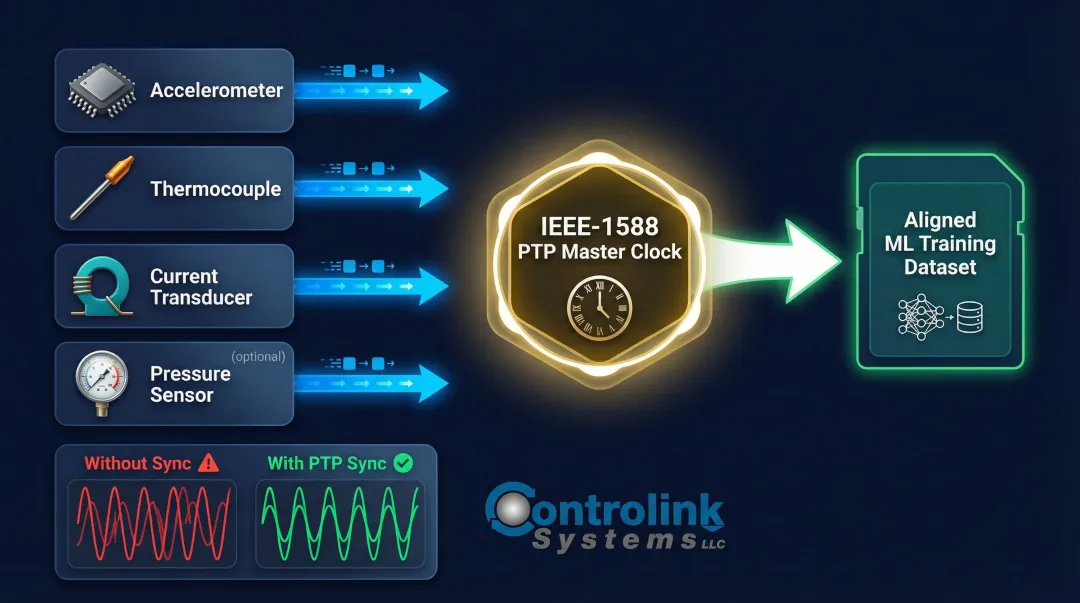

Data Synchronization Across Channels

Misaligned timestamps between sensors measuring the same event create artifacts that corrupt feature engineering and confuse ML models. In multi-axis systems or distributed sensor networks, clock synchronization via IEEE-1588 Precision Time Protocol (PTP) keeps all channels within sub-microsecond alignment — a hard requirement when cross-correlating channels to detect faults that appear across multiple axes simultaneously.
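
Even with synchronized clocks, channels sampled by different devices rarely share identical timestamps, so they need to be aligned before features are computed. The sketch below matches each reference timestamp to the nearest sample on a second channel and leaves a gap (`None`) when nothing falls within tolerance. Timestamps are integer microseconds, and the 100 µs tolerance is an illustrative assumption.

```python
from bisect import bisect_left


def align_nearest(ref_times, other_times, other_values, tolerance):
    """For each reference time, take the nearest other-channel value within tolerance.

    other_times must be sorted in ascending order.
    """
    aligned = []
    for t in ref_times:
        i = bisect_left(other_times, t)
        # Only the neighbors on either side of the insertion point can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_times)]
        best = min(candidates, key=lambda j: abs(other_times[j] - t), default=None)
        if best is not None and abs(other_times[best] - t) <= tolerance:
            aligned.append(other_values[best])
        else:
            aligned.append(None)  # no trustworthy match; do not invent one
    return aligned


# Hypothetical channels: reference at 1 kHz, second channel with a dropout.
ref_us = [0, 1000, 2000, 3000]
other_us = [20, 1010, 3500]
other_values = [1.0, 1.1, 1.4]
aligned = align_nearest(ref_us, other_us, other_values, tolerance=100)
print(aligned)
```

Leaving explicit gaps keeps the dropout visible to later preprocessing instead of silently pairing samples that were measured at different moments.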

Common Misconceptions and When Data Acquisition Falls Short

Misconception 1: "More Data Always Means Better Models"

Volume cannot compensate for poor relevance or labeling accuracy. A million samples of normal operation do not teach a model to detect rare faults. Class imbalance and distributional mismatch — training data that doesn't reflect actual operating conditions — cause more damage than insufficient volume alone.

Misconception 2: "Existing Monitoring Data Is Automatically ML-Ready"

Data collected for process control was sampled at rates suited to control loops, not ML objectives. It often lacks labels, has gaps, and was not synchronized across channels. Timestamp formats may be inconsistent. Sensor calibration history may not be documented.

Misconception 3: "DAQ Hardware Alone Determines Data Quality"

A 24-bit ADC sampling at 100 kHz still produces unusable data if:

- The sensor is mounted in the wrong location

- The anti-aliasing filter is misconfigured

- Data is never labeled with ground-truth fault states

Software configuration, sensor placement, and labeling workflows matter just as much as the hardware itself.

When Standard DAQ Approaches Are Insufficient

Standard DAQ fails ML when:

- Phenomena of interest are rare events with very low occurrence frequency (insufficient positive examples for classification)

- Data from one environment is used to train a model deployed in a different environment without domain adaptation

- Required labels can only be assigned retrospectively by subject-matter experts who are unavailable

The 80/20 Reality of ML Projects

A 2016 CrowdFlower survey, reported by Forbes, found that data scientists spend roughly 80% of their time collecting, cleaning, and organizing data rather than selecting or tuning models. Teams that underinvest in DAQ strategy and expect the algorithm to compensate are consistently disappointed.

The model is not the bottleneck. The data is. A well-structured DAQ process delivers deployable ML systems. A poorly structured one — regardless of model sophistication — produces failed experiments.

Frequently Asked Questions

What is data acquisition in machine learning?

Data acquisition in ML is the process of collecting and compiling data from sensors, databases, APIs, or manual sources so that it can be used to train and validate machine learning models. It sits at the start of the ML workflow and directly determines what information gets captured, logged, and made available for training.

What are the 4 methods of data acquisition?

The four main methods are direct physical sensing (sensors/DAQ hardware), API and database extraction, manual data entry and structured observation, and use of public or synthetic datasets. Most industrial ML projects combine two or more — direct sensing for high-fidelity signals, database extraction for contextual metadata.

What is the 80/20 rule in machine learning?

The 80/20 rule in ML refers to the widely observed pattern where roughly 80% of project effort goes into data collection, cleaning, and preparation, while only 20% goes into model building and tuning. This makes data acquisition strategy one of the most important determinants of project success.

What is the difference between a DAQ system and a machine learning data pipeline?

A DAQ system captures and records physical or digital signals in real time (hardware + logging software), while an ML data pipeline transforms that stored data—cleaning, labeling, feature engineering—into a format suitable for model training. A DAQ system is the upstream input to an ML pipeline.

How does data quality affect machine learning model performance?

Data quality issues (inaccurate labels, missing values, sensor drift, and poor coverage of operating conditions) directly degrade model accuracy and reliability. In practice, improving data quality often yields larger performance gains than switching to a more complex algorithm.

What sensors are commonly used for data acquisition in manufacturing ML applications?

Common types include accelerometers (vibration/bearing faults), thermocouples or RTDs (thermal monitoring), current transducers (motor health), pressure sensors (hydraulic systems), and encoders (position and speed). The right choice depends on which physical parameter is most predictive of the target outcome.