Introduction

Manufacturing and operational environments generate massive amounts of data daily: sensor readings, CNC program files, process parameters, quality logs, test results. The volume itself isn't the real risk. The real risk is acting on data that's inaccurate, outdated, or inconsistent without knowing it.

Any of the following can trigger scrap events, quality escapes, or compliance violations before anyone catches the problem:

- A wrong program revision reaching a machine

- A sensor reading outside acceptable limits that goes undetected

- A duplicate batch record inflating output metrics

This article focuses on why data health monitoring tools matter in practice for enterprise operations, not just in theory. Specifically, we examine the measurable outcomes they drive across quality, efficiency, and cost—and what happens when enterprises operate without them.

TLDR

- Data health monitoring tools continuously evaluate whether enterprise data is accurate, complete, and consistent—catching problems before they affect decisions or operations

- Key benefits include early error detection, improved decision-making, lower operational costs, reduced scrap/rework, and regulatory compliance support

- Skip active monitoring and you're stuck doing damage control after the fact—fixing problems that could have been caught upstream

- Value compounds over time: sustained monitoring builds a more reliable, self-correcting data infrastructure

What Is Data Health Monitoring?

Data health monitoring is the ongoing process of evaluating whether an organization's data meets defined quality standards—checking for accuracy, completeness, consistency, timeliness, and conformity—so the data driving operations and decisions can be trusted.

In enterprise environments, this applies wherever data flows across systems: from production floor sensors and CNC program files to ERP records, quality logs, and test results. It's not limited to IT databases; it covers any data that influences operational outcomes.

Data health monitoring is not a reporting exercise. It's the mechanism ensuring that the information guiding production, quality control, and business decisions is reliable enough to act on. In practice, this validation runs continuously across every data touchpoint:

- Confirms a CNC program file is the correct revision before transmission to the machine

- Verifies sensor-reported process parameters fall within acceptable ranges

- Checks quality records for completeness and consistency with other system data

Without this ongoing validation, enterprises assume data is correct until an error surfaces downstream—often after it has already cost time, material, or product quality.

Key Advantages of Data Health Monitoring Tools for Enterprises

Each advantage below is tied to outcomes enterprises actually track—cost, quality, efficiency, risk, and uptime—not abstract capabilities.

Advantage 1: Early Detection of Data Errors Before They Cascade into Costly Mistakes

Data health monitoring tools continuously scan incoming and stored data against predefined rules and thresholds, catching errors—missing values, out-of-range entries, duplicate records, format mismatches—at the point of entry or ingestion rather than after they've been used to make decisions.
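As a rough illustration of these entry-point checks, the sketch below runs four of the rule types named above (missing values, out-of-range entries, duplicate records, format mismatches) over a batch of records. The field names, the temperature range, and the `B` + six-digit batch ID format are all hypothetical assumptions chosen for the example. This is not the rule set of any particular monitoring product.

```python
import re

# Hypothetical rules for one data source; field names and limits are assumptions.
REQUIRED_FIELDS = ("batch_id", "machine_id", "spindle_temp_c")
TEMP_RANGE_C = (15.0, 60.0)                   # assumed acceptable range
BATCH_ID_PATTERN = re.compile(r"^B\d{6}$")    # assumed format, e.g. B000123


def check_records(records):
    """Return a list of (record index, issue) tuples for a batch of dict records."""
    issues = []
    seen_batches = set()
    for i, rec in enumerate(records):
        # Missing values: a required field is absent or None.
        for name in REQUIRED_FIELDS:
            if rec.get(name) is None:
                issues.append((i, f"missing {name}"))
        batch_id = rec.get("batch_id")
        if batch_id is not None:
            # Format mismatch against the assumed ID pattern.
            if not BATCH_ID_PATTERN.match(str(batch_id)):
                issues.append((i, "bad batch_id format"))
            # Duplicate record: the same batch ID was already seen in this batch.
            if batch_id in seen_batches:
                issues.append((i, "duplicate batch_id"))
            seen_batches.add(batch_id)
        # Out-of-range value.
        temp = rec.get("spindle_temp_c")
        if temp is not None and not TEMP_RANGE_C[0] <= temp <= TEMP_RANGE_C[1]:
            issues.append((i, "spindle_temp_c out of range"))
    return issues


records = [
    {"batch_id": "B000001", "machine_id": "M1", "spindle_temp_c": 42.0},
    {"batch_id": "B000001", "machine_id": "M2", "spindle_temp_c": 71.5},
    {"batch_id": "X12", "machine_id": None, "spindle_temp_c": 30.0},
]
print(check_records(records))
```

In this sample, the second record is flagged as a duplicate with an out-of-range temperature, and the third is flagged for a missing machine ID and a malformed batch ID. A real deployment would load its rules from configuration rather than hard-coding them.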

How this works in practice:

In manufacturing contexts, this means:

- Flagging a wrong revision of a CNC program file before it reaches the machine

- Catching a sensor reading outside acceptable process parameters before it skews a quality report

- Identifying a duplicate batch record before it inflates output metrics
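The first example, a revision gate, can be sketched as a check that runs before a program file is sent to the machine. The approach compares both the revision label and a SHA-256 hash of the file contents against an approved record. The registry `APPROVED`, the program number `O1001`, and the function names are hypothetical. They do not represent the API of Controlink or any other DNC product.

```python
import hashlib


def sha256_bytes(data: bytes) -> str:
    """Hex SHA-256 digest of a file's contents."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical registry of engineering-approved programs:
# program number -> (approved revision label, SHA-256 of the approved file).
APPROVED = {
    "O1001": ("C", sha256_bytes(b"G21 G90\nM03 S1200\n")),
}


def release_ok(program: str, revision: str, contents: bytes) -> bool:
    """Allow transmission only if the revision and exact file contents match the approved record."""
    approved = APPROVED.get(program)
    if approved is None:
        return False  # unknown program: block by default
    approved_rev, approved_hash = approved
    return revision == approved_rev and sha256_bytes(contents) == approved_hash


print(release_ok("O1001", "C", b"G21 G90\nM03 S1200\n"))  # approved file
print(release_ok("O1001", "C", b"G21 G90\nM03 S1500\n"))  # same label, edited contents
```

Hashing the contents catches files that carry the right revision label but were edited after approval, a case a label-only check would miss.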

Why this is an advantage:

Errors caught early are far cheaper to fix than errors discovered after production has run on bad data. The 1-10-100 rule of thumb for data quality holds that preventing an error at the source costs $1, correcting it downstream costs $10, and dealing with the resulting failure costs $100. In manufacturing, a wrong CNC program revision caught before it reaches the machine is a minor fix; caught after production, it becomes scrap.
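A short worked example makes the 1-10-100 arithmetic concrete. It assumes 100 errors and compares catching all of them at the source with a hypothetical split in which some escape to later stages. The split is invented for illustration. Only the unit costs come from the rule.

```python
# Relative cost per error at each stage, per the 1-10-100 rule of thumb.
COST_PER_ERROR = {"prevention": 1, "correction": 10, "failure": 100}


def total_cost(errors_by_stage):
    """Sum the relative cost, given how many errors are handled at each stage."""
    return sum(COST_PER_ERROR[stage] * n for stage, n in errors_by_stage.items())


# 100 errors, all caught at the source.
at_source = total_cost({"prevention": 100})
# The same 100 errors with a hypothetical share escaping downstream.
mixed = total_cost({"prevention": 50, "correction": 30, "failure": 20})
print(at_source, mixed)
```

Here, letting 20 of the 100 errors reach the failure stage makes them account for 2,000 of the 2,350 total, which is why upstream detection dominates the economics.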

When data errors are caught in real time, quality decisions rest on accurate information rather than on data that only appeared valid. CNC programming errors such as incorrect tool paths, improper feed rates, or miscalculated offsets directly increase scrap rates and cause dimensional inaccuracies. Typical scrap rates in CNC machining run from 0.5% to 4%, and can reach 3% to 6% for high-precision work.

KPIs impacted and when this matters most:

- Primary KPIs: Error rate per data source, scrap rate, rework frequency, mean time to detect a data issue

- Highest impact: High-volume manufacturing environments where data flows rapidly across multiple systems (ERP, MES, CNC controllers, testing equipment) and a single bad data point can trigger a chain reaction across production runs

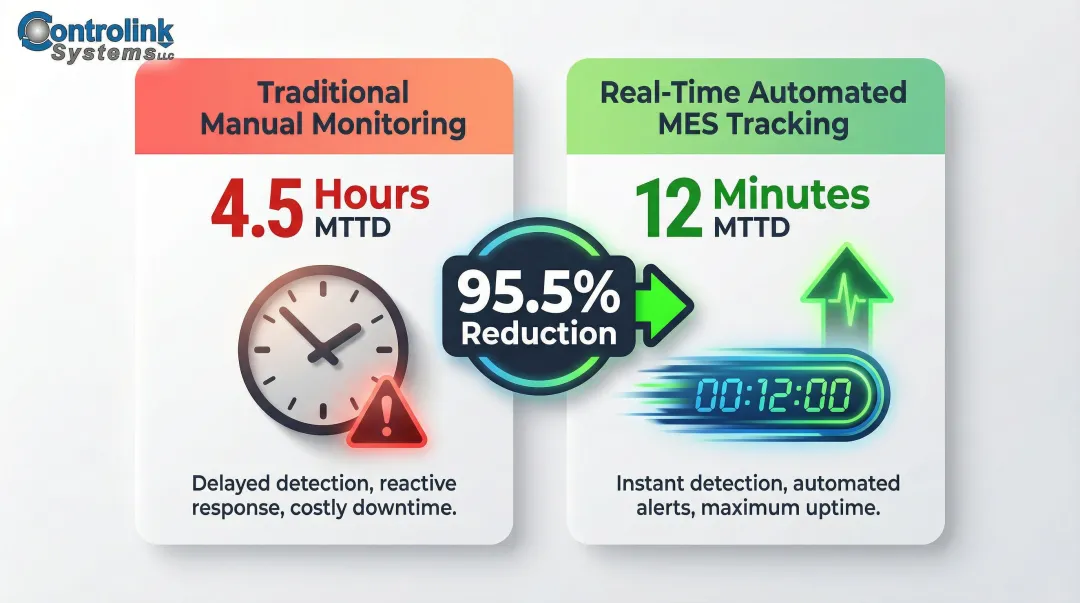

Reducing Mean Time to Detect:

Traditional manual downtime logs and end-of-shift reports suffer from latency, often identifying issues hours after they occur. In one reported implementation, real-time automated MES tracking reduced the Mean Time to Detect (MTTD) for system-induced production drops from 4.5 hours to 12 minutes, a reduction of about 95.6%.
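MTTD is the average gap between when an issue occurs and when it is detected. A minimal sketch follows, assuming each event is logged as an (occurred, detected) timestamp pair. The function names are illustrative. The second function reproduces the percentage from the 4.5-hour and 12-minute figures: (270 - 12) / 270 ≈ 95.6%.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(events):
    """events: list of (occurred_at, detected_at) datetime pairs. Returns the mean gap."""
    gaps = [detected - occurred for occurred, detected in events]
    return sum(gaps, timedelta()) / len(gaps)


def percent_reduction(before: timedelta, after: timedelta) -> float:
    """Relative improvement, in percent, from one MTTD value to another."""
    return (before - after) / before * 100


t0 = datetime(2024, 1, 1, 8, 0)
events = [(t0, t0 + timedelta(minutes=10)), (t0, t0 + timedelta(minutes=14))]
print(mean_time_to_detect(events))  # mean of a 10- and a 14-minute gap
print(round(percent_reduction(timedelta(hours=4.5), timedelta(minutes=12)), 1))
```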

Advantage 2: Consistent, Reliable Data That Enables Confident Operational Decision-Making

Operational decisions are only as reliable as the data behind them. Data health monitoring enforces consistency across systems: field definitions stay uniform, values stay within expected ranges, and records stay complete — so every team is working from the same baseline.

How this works in practice:

In enterprise operations, different teams often make decisions about machine scheduling, tooling changes, process adjustments, or quality holds while pulling from different systems. Without enforced consistency, those teams operate from conflicting data states — and contradictory actions follow.

Consider a scenario where a quality team clears a batch while production data records a process deviation that neither team noticed. Without monitoring, these contradictory data states persist until a customer complaint or audit exposes the gap.

Why this is an advantage:

Poor data quality costs organizations an average of $12.9 million annually. Inaccurate data causes 27% of strategic errors in Fortune 500 companies, and 45% of business data used for decisions is considered incomplete or inaccurate.

When teams pull from unverified data, they often reach conclusions that make sense in isolation but conflict at the operational level — scheduling a run on a machine that quality data already flagged for hold, for instance. Monitoring creates a shared, auditable record that prevents those gaps from compounding.

Bridging the ERP-MES disconnect:

ERP systems are designed to be a single source of truth, but inaccurate data undermines them: once users stop trusting the system, parallel records emerge and the ERP fails at its core job. Without live integration with shop-floor systems like MES or SCADA, an ERP stays blind to real-time production. If a machine breaks down and that event isn't pushed upstream, schedules and inventory plans remain outdated until someone catches the discrepancy manually.
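A cross-system consistency check for this situation can be sketched as a reconciliation between two snapshots: the ERP's job-to-machine schedule and the live machine state reported by the shop floor. The dictionaries, machine IDs, and state names below are hypothetical. A real integration would pull them from the ERP and MES rather than define them inline.

```python
# Hypothetical snapshots from two systems.
erp_schedule = {"JOB-17": "M3", "JOB-18": "M5", "JOB-19": "M7"}       # job -> machine
mes_status = {"M3": "running", "M5": "down", "M7": "quality_hold"}    # machine -> live state

# States that should block a scheduled run (assumed for this example).
BLOCKING_STATES = {"down", "quality_hold"}


def schedule_conflicts(schedule, status):
    """Return (job, machine, state) for jobs scheduled on blocked or unreported machines."""
    conflicts = []
    for job, machine in sorted(schedule.items()):
        state = status.get(machine, "unknown")  # no live data is itself a red flag
        if state in BLOCKING_STATES or state == "unknown":
            conflicts.append((job, machine, state))
    return conflicts


print(schedule_conflicts(erp_schedule, mes_status))
```

Treating a machine with no live status as a conflict is a deliberate choice: missing upstream data is exactly the blind spot described above.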

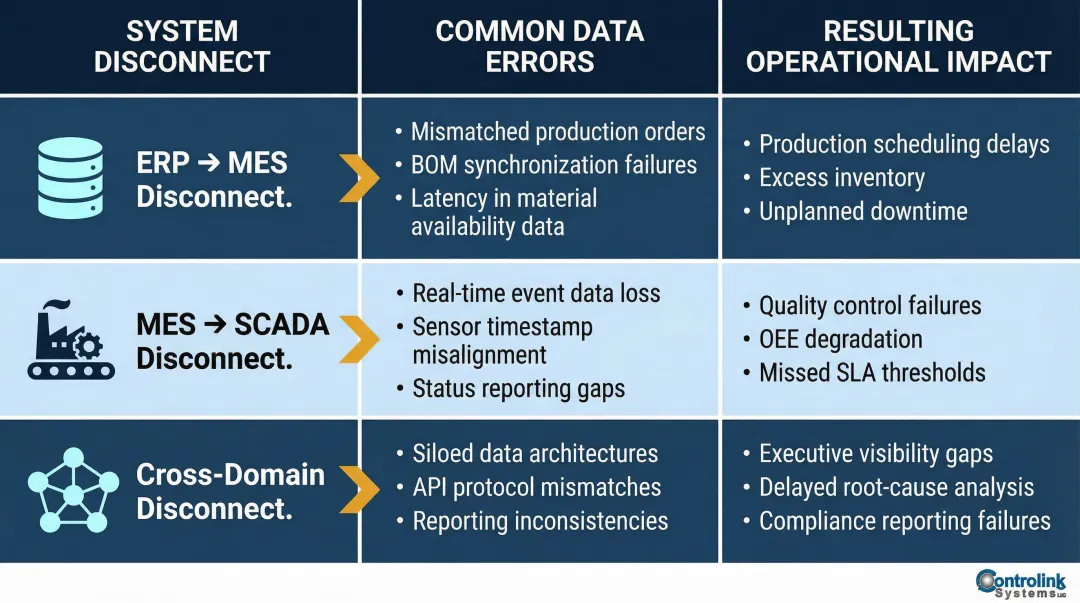

Common system disconnects and their impacts:

| System Disconnect | Common Data Errors | Resulting Operational Impact |
|---|---|---|
| ERP to MES | Unclear ownership of master data (BOMs, routings); time granularity mismatches | Mismatched inventory, reporting discrepancies, automated bad information |
| MES to SCADA | Lack of real-time synchronization; batch data updates instead of live feeds | Outdated schedules, manual workarounds, delayed decision-making |
| Cross-Domain | Inconsistent vendor/customer names; fragmented data islands | Misattributed spend, failed compliance audits, manual reconciliation wasting 40% of effort |
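For the cross-domain row, one common mitigation is to normalize entity names before aggregating, so that variants of the same vendor roll up to a single key. The sketch below uses a simple, assumed normalization: lowercasing, stripping punctuation, and dropping a small hypothetical list of legal suffixes. Production entity resolution usually needs more than this, such as fuzzy matching or a maintained master record.

```python
import re
from collections import defaultdict

# Hypothetical list of legal-form suffixes to ignore when comparing names.
SUFFIXES = {"inc", "llc", "ltd", "corp", "co"}


def normalize_name(name: str) -> str:
    """Lowercase, replace punctuation with spaces, and drop common legal suffixes."""
    cleaned = re.sub(r"[^\w\s]", " ", name.lower())
    return " ".join(t for t in cleaned.split() if t not in SUFFIXES)


def spend_by_vendor(invoices):
    """Total spend per normalized vendor name from (vendor, amount) pairs."""
    totals = defaultdict(float)
    for vendor, amount in invoices:
        totals[normalize_name(vendor)] += amount
    return dict(totals)


invoices = [("Acme Tool Co.", 1200.0), ("ACME TOOL, INC", 800.0), ("Burr Supply LLC", 300.0)]
print(spend_by_vendor(invoices))
```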

KPIs impacted and when this matters most:

- Primary KPIs: Data consistency score across systems, decision lead time, quality escape rate, customer complaint rate tied to data-driven errors

- Highest impact: Enterprises running multi-system environments—where CNC machines, PLCs, SQL databases, and testing platforms all generate data that must align for operations to function correctly

Advantage 3: Reduced Operational Costs Through Automated Data Validation

Manual validation is slow, inconsistent, and expensive. Automated data health monitoring runs continuously in the background — checking for correct values, flagging anomalies, and generating alerts — without pulling operators away from production work.

How this works in practice:

Automated checks replace manual spot-checks of records and program files by operators or quality staff. That manual work is costly: survey data shows professionals spend an average of more than 9 hours per week manually transferring data into digital systems, costing U.S. businesses an average of $28,500 per employee per year.

Tools like Controlink's process monitoring and CNC/DNC communication software are designed to ensure machinists are always working from the latest engineering-approved files—cutting the risk of running incorrect programs and the scrap costs that follow.

The baseline burden of scrap and rework:

APQC benchmarks show scrap and rework costs represent a median of 5.0% of Cost of Goods Sold (COGS) and 1.0% of total sales across industries. For discrete manufacturing, defects cost between $32.0 billion and $58.6 billion annually.

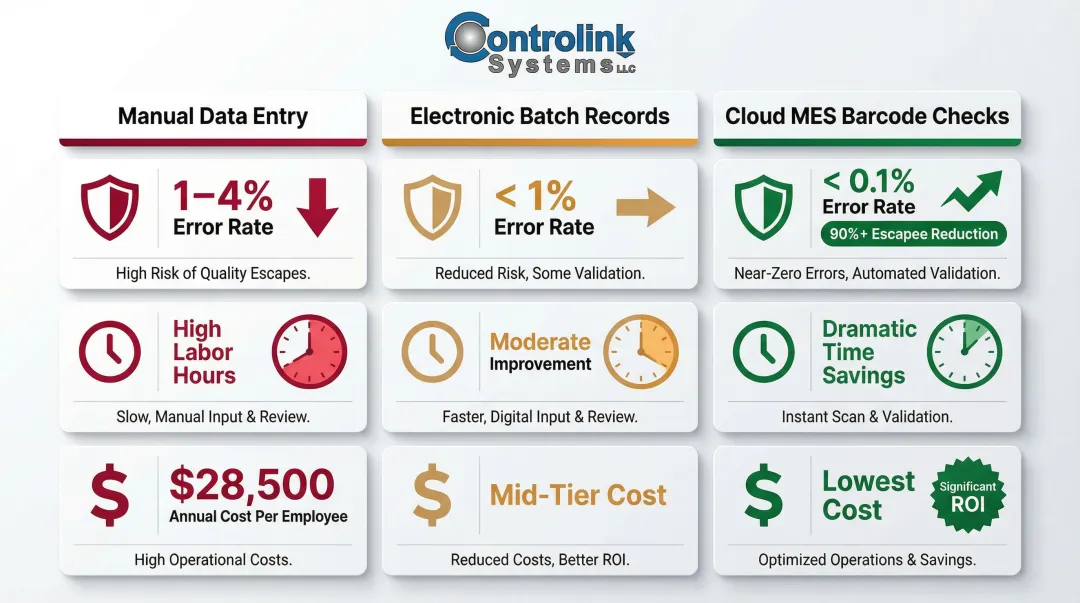

Manual vs. automated validation performance:

| Validation Method | Error Rate / Quality Impact | Time / Labor Impact | Financial Impact |
|---|---|---|---|
| Manual Data Entry | 1% to 4% error rate per field entered | 9+ hours per week per employee | Costs $28,500 per employee annually |
| Electronic Batch Records (EBR) | Eliminates manual transcription errors and enforces strict execution | Reduced QA batch review time from 6 hours to 1 hour | Enabled 250% product line growth without proportional QA headcount |
| Cloud MES Barcode Checks | Forced-quality barcode checks reduced escapes by >90% | Remote troubleshooting cut downtime by 40% | IT cost-per-line reduced by 60% |
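A per-field error rate understates how many records end up wrong, because each record has many fields. Assuming field errors are independent, the probability that a record with n fields contains at least one error is 1 - (1 - p)^n. The 20-field record size below is a hypothetical example, not a figure from the benchmarks above.

```python
def p_record_has_error(per_field_error_rate: float, fields: int) -> float:
    """Probability that at least one field is wrong, assuming independent per-field errors."""
    return 1 - (1 - per_field_error_rate) ** fields


for rate in (0.01, 0.04):
    share = p_record_has_error(rate, 20)
    print(f"{rate:.0%} per field, 20 fields -> {share:.1%} of records affected")
```

Under these assumptions, a 1% per-field rate leaves about 18.2% of 20-field records with at least one error, and a 4% rate leaves about 55.8%.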

KPIs impacted and when this matters most:

- Primary KPIs: Cost of scrap per period, downtime hours attributed to data-related errors, manual validation labor hours, cost per quality incident

- Highest impact: Enterprises managing high part volumes, complex multi-machine environments, or operations where using outdated or incorrect data (wrong program revision, wrong process parameter) directly translates to material waste and lost machine time

What Happens When Data Health Monitoring Is Missing or Ignored

Enterprises that don't monitor data health don't eliminate data problems—they just encounter them later, when the cost of fixing them is higher.

Common consequences include:

- Acting on inaccurate reports that reflect data entry errors rather than real performance

- Operators using outdated process files or parameters because no system flagged the discrepancy

- Quality issues reaching customers because the data chain that should have caught them was never verified

The Compounding Cost of Reactive Data Management

When data problems surface only after affecting production, the response requires investigation, rework, potential scrap, and in regulated industries, possible compliance violations or audit failures. Reactive data management keeps teams perpetually behind—and the costs compound with every delay.

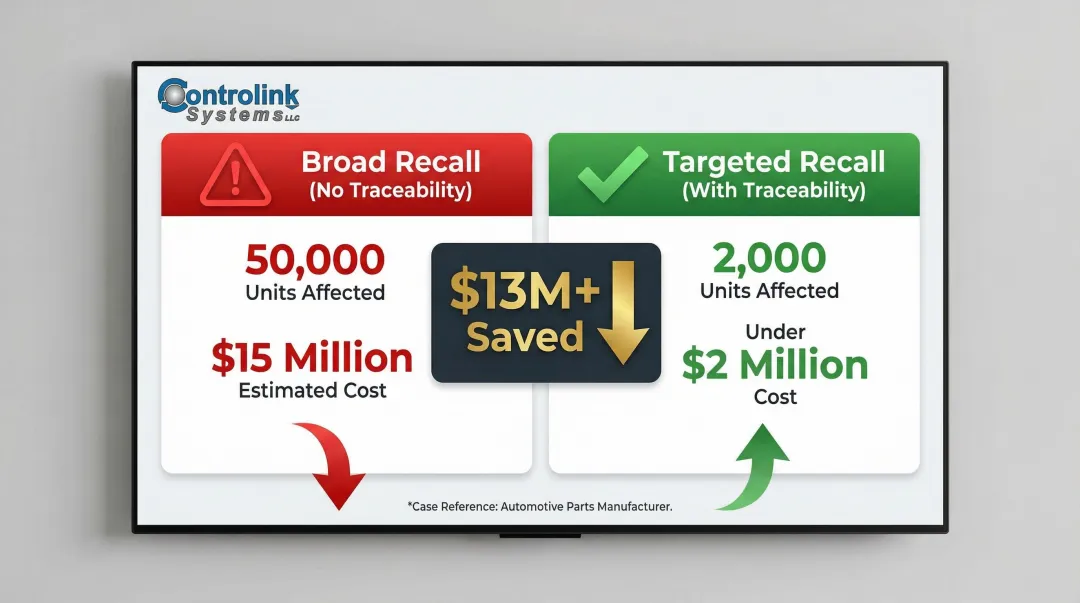

Product recalls typically cost firms $10 million to $50 million in direct expenses, including consumer reimbursements, scrap, rework, and warranty costs. Without precise traceability, manufacturers are forced to issue broad recalls. In one case, a global automotive parts manufacturer used end-to-end product traceability to identify affected products within minutes; they executed a targeted recall affecting 2,000 units instead of 50,000, dropping direct recall costs from an estimated $15 million to under $2 million.
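The mechanism behind a targeted recall is a genealogy lookup: given the component lots found to be defective, find only the finished units that consumed them. Below is a minimal sketch, assuming a hypothetical in-memory mapping from serial number to consumed lot IDs. In practice, this data would live in an MES or traceability database.

```python
# Hypothetical genealogy: finished serial number -> component lot IDs it consumed.
genealogy = {
    "SN-0001": {"LOT-A1", "LOT-B7"},
    "SN-0002": {"LOT-A2", "LOT-B7"},
    "SN-0003": {"LOT-A1", "LOT-B8"},
}


def affected_units(genealogy, suspect_lots):
    """Return the sorted serials that contain any suspect lot."""
    suspect = set(suspect_lots)
    return sorted(sn for sn, lots in genealogy.items() if lots & suspect)


print(affected_units(genealogy, ["LOT-A1"]))
```

Without this mapping, the only safe scope is every unit built in the affected time window, which is what drives broad and expensive recalls.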

Quality Escapes Driven by "Invisible" Data Errors

FDA recall records include medical devices pulled because of data and instruction errors. A Thermo Fisher dynamic bath was recalled because incorrect assembly caused fluid temperatures to exceed the user's set point. In a separate case, Siemens ADVIA calibrators were recalled due to incorrect platelet value assignments. In both cases, the error went undetected until products reached the field.

Scaling Risk

As enterprises grow, add machines, and expand product lines, unmonitored data degrades faster. A manageable inconsistency at small scale becomes a systemic reliability problem at enterprise scale.

The numbers show how widespread this vulnerability is: only 3% of companies' data meets basic quality standards, and 70% of manufacturers still collect data manually. Together, these figures suggest that data quality will deteriorate as complexity increases unless monitoring is in place to catch problems.

How to Get the Most Value from Data Health Monitoring Tools

The tools deliver value when they're configured against outcomes, not just turned on.

Define What "Healthy Data" Looks Like

For each data source in the enterprise, establish:

- What ranges are acceptable for numerical values

- What fields are mandatory and cannot be null

- What consistency rules apply across related systems

- What timeliness standards must be met (e.g., sensor data must be no more than 5 minutes old)

Without defined thresholds, monitoring generates noise rather than actionable signal.
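One way to turn the four questions above into something a tool can enforce is a small per-source specification. The sketch below assumes a hypothetical `SourceSpec` with value ranges, mandatory fields, a timeliness limit (the 5-minute example above), and optional extra rule callables for cross-system consistency. It illustrates the idea. It is not the configuration format of any specific product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class SourceSpec:
    """Hypothetical definition of 'healthy' for one data source."""
    ranges: dict = field(default_factory=dict)    # field -> (min, max)
    mandatory: tuple = ()                         # fields that cannot be null
    max_age: timedelta = timedelta(minutes=5)     # timeliness standard
    checks: tuple = ()                            # extra rules: callable(record) -> message or None


def evaluate(record, spec, now):
    """Return a list of human-readable problems; an empty list means healthy."""
    problems = []
    for name in spec.mandatory:
        if record.get(name) is None:
            problems.append(f"{name} is null")
    for name, (lo, hi) in spec.ranges.items():
        value = record.get(name)
        if value is not None and not lo <= value <= hi:
            problems.append(f"{name}={value} outside [{lo}, {hi}]")
    ts = record.get("timestamp")
    if ts is not None and now - ts > spec.max_age:
        problems.append("stale reading")
    for check in spec.checks:  # e.g. a comparison against another system's record
        message = check(record)
        if message:
            problems.append(message)
    return problems


spindle = SourceSpec(ranges={"spindle_temp_c": (15.0, 60.0)}, mandatory=("machine_id", "timestamp"))
now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
reading = {"machine_id": "M3", "spindle_temp_c": 64.2, "timestamp": now - timedelta(minutes=9)}
print(evaluate(reading, spindle, now))
```

Keeping the thresholds in data rather than in code also makes the periodic reviews described later a matter of editing a specification.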

Act on Monitoring Insights Consistently

The value of a monitoring tool is realized when its alerts trigger corrections, not just documentation. Organizations should establish clear workflows for:

- Who receives alerts (not just IT teams—route to shop-floor personnel who can act)

- Who is responsible for resolution

- What constitutes acceptable response time

- How corrections are logged and reviewed

For manufacturing environments, this means connecting data health events to shop-floor workflows. If a CNC program revision mismatch is detected, the alert should reach the machinist or production supervisor immediately, not sit in an IT queue.
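The workflow questions above (who receives an alert, who resolves it, and how fast) can be captured in a routing table. In the sketch below, the alert types, roles, and response-time targets are hypothetical placeholders that each plant would set for itself. Unknown alert types fall back to a default owner, so no alert is left unassigned.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical routing table: alert type -> (role that must act, response-time target).
ROUTES = {
    "program_revision_mismatch": ("machinist", timedelta(minutes=5)),
    "parameter_out_of_range": ("production_supervisor", timedelta(minutes=15)),
    "duplicate_batch_record": ("quality_engineer", timedelta(hours=4)),
}
DEFAULT_ROUTE = ("data_steward", timedelta(hours=24))


@dataclass
class Alert:
    kind: str
    machine_id: str
    detail: str


def route(alert):
    """Return (owner role, response-time target); unknown kinds go to a default owner."""
    return ROUTES.get(alert.kind, DEFAULT_ROUTE)


def is_overdue(alert, raised_at, now):
    """True if the alert has been open longer than its response-time target."""
    _, target = route(alert)
    return now - raised_at > target


alert = Alert("program_revision_mismatch", "M3", "rev B loaded, rev C approved")
raised = datetime(2024, 5, 1, 9, 0)
print(route(alert), is_overdue(alert, raised, raised + timedelta(minutes=7)))
```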

Review and Calibrate Regularly

Business processes, product requirements, and regulatory standards change over time. Review data health thresholds at minimum quarterly, and also whenever any of these occur:

- New product introductions

- Process or workflow changes

- System or software upgrades

- Updates to regulatory requirements

Regular calibration keeps monitoring aligned with current operational realities rather than yesterday's assumptions.
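The review cadence can be automated as a reminder that fires either on a calendar interval or on any of the trigger events listed above. The sketch below approximates "quarterly" as 91 days and uses hypothetical event labels. Both are assumptions to adjust to local practice.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=91)  # roughly one quarter (assumption)
# Hypothetical labels for the trigger events listed above.
TRIGGERS = {"new_product", "process_change", "system_upgrade", "regulatory_update"}


def review_due(last_review: date, today: date, events_since_review) -> bool:
    """True if a quarter has passed or any trigger event occurred since the last review."""
    if today - last_review >= REVIEW_INTERVAL:
        return True
    return any(event in TRIGGERS for event in events_since_review)


print(review_due(date(2024, 1, 1), date(2024, 2, 1), []))                  # 31 days, no triggers
print(review_due(date(2024, 1, 1), date(2024, 2, 1), ["system_upgrade"]))  # trigger event
```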

Conclusion

Data health monitoring tools give enterprises control over the information their operations depend on. The payoff is concrete:

- Accurate data reduces scrap and rework before it compounds

- Consistent records shorten decision cycles across teams

- Timely monitoring lowers costs by catching errors at the source

- Proactive visibility protects against compliance exposure

Data health monitoring is an ongoing operational discipline, not a one-time setup. Enterprises that review and act on monitoring insights continuously build a data foundation that scales with the business rather than becoming a liability as complexity grows.

The difference between a $1 fix at the source and a $100 failure downstream is whether monitoring is in place to catch the error before it cascades.

Frequently Asked Questions

What are the benefits of data health monitoring tools for enterprises?

Data health monitoring tools deliver early error detection, improved decision-making, reduced operational costs, lower scrap and rework, and compliance support. Benefits compound over time as consistent monitoring builds a more reliable foundation for production decisions.

What types of data should manufacturing enterprises prioritize monitoring?

Prioritize CNC program files and revisions, process parameter data from sensors and controllers, production and quality logs, test results, and any data flowing between PLCs, MES, and ERP systems. These data sources directly influence production outcomes and quality.

How does data health monitoring help reduce scrap and downtime?

Monitoring catches incorrect or outdated data—such as a wrong program revision or an out-of-spec process parameter—before it reaches the machine or influences a production decision. This prevents the scrap events and unplanned stoppages that follow when bad data drives operations.

How do data health monitoring tools support regulatory compliance for enterprises?

Monitoring tools maintain audit trails, enforce data conformity rules, and flag non-compliant records in real time. For aerospace, automotive, and medical device manufacturers, this makes it easier to demonstrate data integrity during audits and reduces exposure to regulatory fines.

What is the difference between data monitoring and data quality management?

Data monitoring is the continuous, real-time process of checking whether data meets defined standards. Data quality management is the broader discipline that includes policies, governance, and correction workflows.

How often should enterprises review their data health monitoring rules and thresholds?

Review rules and thresholds at minimum quarterly or whenever significant changes occur—such as new product introductions, process changes, system upgrades, or updates to regulatory requirements. Regular reviews prevent rules from drifting out of sync with how your shop actually runs.